Why can't we build and reuse AI models? More data, more problems? Learn how AI foundation models change the game for training AI/ML from IBM Research AI VP Sriram Raghavan and Darío Gil, SVP and Director of IBM Research, as they demystify the technology and share a set of principles to guide your generative AI business strategy. Experience watsonx, IBM’s new data and AI platform for generative AI, and learn about the breakthroughs that IBM Research is bringing to this platform and to the world of computing. Explore foundation models, an emerging approach to machine learning and data representation. Even in the age of big data, when AI/ML is more prevalent, training the next generation of AI tools such as NLP systems requires enormous amounts of data, and applying AI models to new or different domains can be tricky. A foundation model can consolidate data from several sources so that one model may then be used for various activities. But how will foundation models be used for things beyond natural language processing? Don't miss this episode to explore how foundation models are a paradigm shift in how AI gets done.

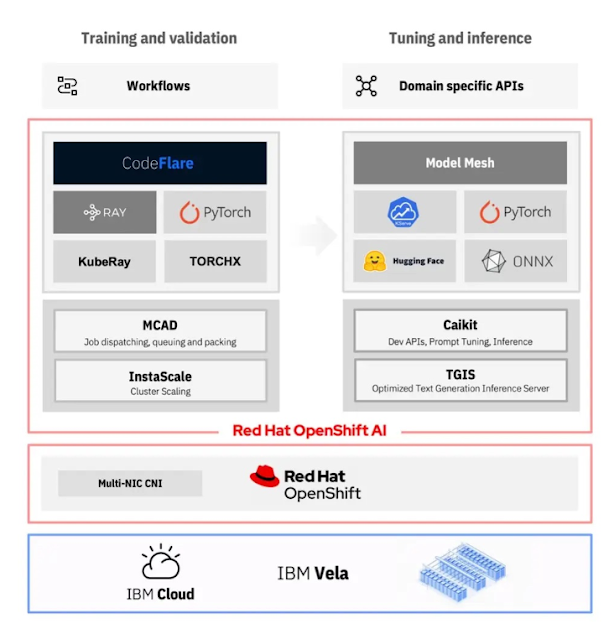

You can bring your own data and AI models to watsonx or choose from a library of tools and technologies. You can train a model or influence its training if you want, and then tune it, which gives you transparency and control over the governance of your data and AI models. You can prompt it too. Instead of only one model, you can have a family of models. Foundation models trained with your own data become a more valuable asset. Watsonx is a new integrated data and AI platform that helps you become a value creator. It consists of three primary parts. watsonx.data is a massive, curated data repository with a data management system, ready to be tapped to train and fine-tune models. watsonx.ai is an enterprise studio to train, validate, tune and deploy traditional ML and foundation models that provide generative AI capabilities. watsonx.governance is a powerful set of tools to ensure your AI is executing responsibly. They work together seamlessly throughout the entire lifecycle of foundation models. Watsonx is built on top of Red Hat OpenShift. The lifecycle consists of the following steps:

STEP 1: Preparing our data [acquire, filter and pre-process, version and tag]. After each data set is filtered and processed, it receives a data card. The data card records the name and version of the data pile and specifies its content and the filters that have been applied to it. We can have multiple data piles. They coexist in watsonx.data, and access to the different versions of data maintained for different purposes is managed seamlessly.
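To make the data card idea concrete, here is a minimal sketch of how such a record and a versioned catalog might look. The `DataCard` and `DataCatalog` names, their fields, and the example pile are hypothetical illustrations, not the watsonx.data API.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class DataCard:
    """Hypothetical record describing one processed data pile."""
    pile_name: str
    version: str
    contents: str
    filters_applied: Tuple[str, ...] = ()


class DataCatalog:
    """Hypothetical in-memory catalog where several pile versions coexist."""

    def __init__(self) -> None:
        self._cards: Dict[Tuple[str, str], DataCard] = {}

    def register(self, card: DataCard) -> None:
        key = (card.pile_name, card.version)
        if key in self._cards:
            # A published version is immutable; changes need a new version.
            raise ValueError(f"{key} is already registered")
        self._cards[key] = card

    def get(self, pile_name: str, version: str) -> DataCard:
        return self._cards[(pile_name, version)]

    def versions(self, pile_name: str) -> List[str]:
        return sorted(v for (name, v) in self._cards if name == pile_name)


catalog = DataCatalog()
catalog.register(DataCard("support-tickets", "1.0", "English support tickets",
                          ("deduplication",)))
catalog.register(DataCard("support-tickets", "1.1", "English support tickets",
                          ("deduplication", "pii-removal")))
```

Keeping the card immutable and keyed by name plus version is one way to let different versions of the same pile serve different purposes side by side.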

STEP 2: Using the data to train, validate, and tune the model, and to deploy applications and solutions. We move from watsonx.data to watsonx.ai and start by picking a model architecture from the five families that IBM provides. These are the bedrock of the models, and they range from encoder-only and encoder-decoder to decoder-only and other novel architectures.

What are foundation models?

Foundation models are AI neural networks trained on massive unlabeled datasets to handle a wide variety of jobs from translating text to analyzing medical images. We're witnessing a transition in AI. Systems that execute specific tasks in a single domain are giving way to broad AI that learns more generally and works across domains and problems. Foundation models, trained on large, unlabeled datasets and fine-tuned for an array of applications, are driving this shift. The models are pre-trained to support a range of natural language processing (NLP) type tasks including question answering, content generation and summarization, text classification and extraction. Future releases will provide access to a greater variety of IBM-trained proprietary foundation models for efficient domain and task specialization.


Foundation models are trained on massive amounts of broad, raw data, which gives them generative AI capabilities that can be applied to different tasks, such as natural language processing. Instead of one model built solely for one task, foundation models can be adapted across a wide variety of scenarios: summarizing documents, generating stories, answering questions, writing code, solving math problems, and synthesizing audio. A year after the Stanford researchers who coined the term defined foundation models, other tech watchers coined a related term: generative AI. It’s an umbrella term for transformers, large language models, diffusion models and other neural networks capturing people’s imaginations because they can create text, images, music, software and more.

IBM plans to offer a suite of foundation models, ranging from smaller encoder-based models to encoder-decoder and decoder-only models.


Watsonx is IBM's enterprise-ready AI and data platform designed to multiply the impact of AI across your business. The platform comprises three powerful products: the watsonx.ai studio for new foundation models, generative AI and machine learning; the watsonx.data fit-for-purpose data store, built on an open lakehouse architecture; and the watsonx.governance toolkit, to accelerate AI workflows that are built with responsibility, transparency and explainability.


watsonx.ai: an AI studio that combines the capabilities of IBM Watson Studio with the latest generative AI capabilities that leverage the power of foundation models. It provides access to high-quality, pre-trained, proprietary IBM foundation models built with a rigorous focus on data acquisition, provenance, and quality. watsonx.ai is user-friendly: it is not just for data scientists and developers but also for business users, and it provides a simple natural-language interface for different tasks. The studio includes a new playground with easy-to-use prompt tuning. With watsonx.ai, you can train, validate, tune and deploy AI models.

watsonx.governance: IBM describes watsonx.governance as a tool for building responsible, transparent and explainable AI workflows. The more AI is embedded into daily workflows, the more you need proactive governance to drive responsible, ethical decisions across the business. According to IBM, watsonx.governance lets you direct, manage and monitor your organization’s AI activities, and it employs software automation to strengthen your ability to mitigate risk, map to and manage regulatory requirements, and address ethical concerns without the excessive costs of switching your data science platform, even for models developed using third-party tools.


Why we built an AI supercomputer in the cloud. Introducing Vela, IBM’s first AI-optimized, cloud-native supercomputer.

IBM built the Vela supercomputer specifically for training so-called “foundation” AI models such as GPT-3. According to IBM, this new supercomputer should become the basis for all of its own research and development activities for these types of AI models. Vela uses standard x86-based hardware. Each node has a pair of “regular” Intel Xeon Scalable processors, eight 80GB Nvidia A100 GPUs, 1.5TB of DRAM, and four 3.2TB NVMe drives for storage, and is connected to several 100 Gbps Ethernet network interfaces. In addition, IBM has built a new workload-scheduling system for Vela, the MultiCluster App Dispatcher (MCAD), which should handle cloud-based job scheduling for training foundation AI models.

Multi-Cluster Application Dispatcher:

The multi-cluster-app-dispatcher is a Kubernetes controller providing mechanisms for applications to manage batch jobs in a single or multi-cluster environment. The multi-cluster-app-dispatcher (MCAD) controller is capable of (i) providing an abstraction for wrapping all resources of the job/application and treating them holistically, (ii) queuing job/application creation requests and applying different queuing policies, e.g., First In First Out, Priority, (iii) dispatching the job to one of multiple clusters, where an MCAD queuing agent runs, using configurable dispatch policies, and (iv) auto-scaling pod sets, balancing job demands and cluster availability.
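The queuing and dispatching behavior described above can be illustrated with a small toy scheduler. This is only a sketch of the general idea (priority ordering with First In First Out tie-breaking, then placing the whole job on one cluster that can hold it); the `Dispatcher` class, its methods, and the cluster names are hypothetical and are not MCAD's actual API or algorithm.

```python
import heapq
import itertools
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass(order=True)
class QueuedJob:
    sort_key: Tuple[int, int]            # (-priority, arrival order)
    name: str = field(compare=False)
    gpus: int = field(compare=False)     # the job's resources, treated as one unit


class Dispatcher:
    """Toy queue: highest priority first, FIFO among equal priorities."""

    def __init__(self, free_gpus_by_cluster: Dict[str, int]) -> None:
        self.free = dict(free_gpus_by_cluster)
        self._heap: List[QueuedJob] = []
        self._arrival = itertools.count()

    def submit(self, name: str, gpus: int, priority: int = 0) -> None:
        key = (-priority, next(self._arrival))
        heapq.heappush(self._heap, QueuedJob(key, name, gpus))

    def dispatch(self) -> List[Tuple[str, str]]:
        """Place jobs in queue order; stop when the head job fits nowhere."""
        placed = []
        while self._heap:
            job = self._heap[0]
            target = next((cluster for cluster, free in self.free.items()
                           if free >= job.gpus), None)
            if target is None:
                break  # the head-of-line job waits for capacity
            heapq.heappop(self._heap)
            self.free[target] -= job.gpus
            placed.append((job.name, target))
        return placed


dispatcher = Dispatcher({"cluster-a": 8, "cluster-b": 16})
dispatcher.submit("etl", gpus=4)
dispatcher.submit("train-large", gpus=16, priority=5)
dispatcher.submit("eval", gpus=8)
placements = dispatcher.dispatch()
```

In this run the high-priority training job is placed first even though it arrived second, and the last job stays queued because no cluster has enough free GPUs left for all of its resources at once.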

What is prompt-tuning?

Prompt-tuning is an efficient, low-cost way of adapting an AI foundation model to new downstream tasks without retraining the model and updating its weights. Redeploying an AI model without retraining it can cut computing and energy use by at least 1,000 times, saving thousands of dollars. With prompt-tuning, you can rapidly spin up a powerful model for your particular needs. It also lets you move faster and experiment.

In prompt-tuning, the best cues, or front-end prompts, are fed to your AI model to give it task-specific context. The prompts can be extra words introduced by a human, or AI-generated numbers introduced into the model's embedding layer. Like crossword puzzle clues, both prompt types guide the model toward a desired decision or prediction. Prompt-tuning allows a company with limited data to tailor a massive model to a narrow task. It also eliminates the need to update the model’s billions (or trillions) of weights, or parameters. Prompt-tuning originated with large language models but has since expanded to other foundation models, like transformers that handle other sequential data types, including audio and video. Prompts may be snippets of text, streams of speech, or blocks of pixels in a still image or video. We don’t touch the model. It’s frozen.
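The "AI-generated numbers introduced into the model's embedding layer" are often called soft prompts. The following is a minimal sketch of the mechanism under strong simplifying assumptions: the "model" is a toy that averages its input vectors and applies a fixed linear head (`FROZEN_HEAD`, a made-up stand-in for billions of frozen weights), and only the prepended prompt vectors are updated by gradient descent.

```python
import math

# Frozen toy "model" weights: never updated during prompt-tuning.
FROZEN_HEAD = (1.5, -2.0)


def predict(vectors):
    """Average the sequence's embedding vectors, apply the frozen head, squash."""
    n = len(vectors)
    mean = [sum(v[i] for v in vectors) / n for i in range(len(FROZEN_HEAD))]
    z = sum(w * m for w, m in zip(FROZEN_HEAD, mean))
    return 1.0 / (1.0 + math.exp(-z))


def tune_soft_prompt(input_vectors, target, prompt_len=2, steps=200, lr=0.5):
    """Learn prompt vectors that steer the frozen model toward `target`."""
    prompt = [[0.0, 0.0] for _ in range(prompt_len)]
    total = prompt_len + len(input_vectors)
    for _ in range(steps):
        p = predict(prompt + input_vectors)
        # Cross-entropy gradient for each prompt vector: (p - target) * w / total.
        for vec in prompt:
            for i, w in enumerate(FROZEN_HEAD):
                vec[i] -= lr * (p - target) * w / total
    return prompt


task_input = [[1.0, 2.0], [0.5, 1.0]]     # embeddings of the task input (made up)
untuned = predict([[0.0, 0.0], [0.0, 0.0]] + task_input)
soft_prompt = tune_soft_prompt(task_input, target=1.0)
tuned = predict(soft_prompt + task_input)
```

The tuned prompt changes the model's output for the same input while `FROZEN_HEAD` stays exactly as it was, which is the property that makes prompt-tuning cheap compared with retraining.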

For Example: How do AI art generators work?

AI art generators don’t know what an owl looks like in the wild. They don’t know what a sunset looks like in a physical sense. They can only understand details about features, patterns, and relationships within the datasets they’ve been trained on. Prompting for a “beautiful face” is not very helpful. It is more effective to prompt for specific features such as symmetry, big lips, and green eyes. Even if the bot doesn’t understand beauty, it can recognize the features you describe as beautiful and generate something relatively accurate. To get the best results from your AI art generator prompt, you’ll need to give clear and detailed instructions. An effective AI art prompt should include specific descriptions, shapes, colors, textures, patterns, and artistic styles. This allows the neural networks used by the generator to create the best possible visuals.

T5 (Text-to-Text Transfer Transformer) is an encoder-decoder model pre-trained on a multi-task mixture of unsupervised and supervised tasks. It uses the complete transformer, with both an encoder and a decoder. T5 provides a simple way to train a single model on a wide variety of text tasks.

FLAN stands for Fine-tuned LAnguage Net. FLAN models have already been fine-tuned by Google, so you can try your tasks on a model that is already tuned. If you fine-tune it further, you may destroy that tuning by overwriting it.

Flan-UL2 is an encoder-decoder model based on the T5 architecture. It uses the same configuration as the UL2 model released earlier last year. It was fine-tuned using the “Flan” prompt tuning and dataset collection. With 20 billion parameters, Flan-UL2 is a large encoder-decoder model with strong performance.

UL2 20B: An Open Source Unified Language Learner. In “Unifying Language Learning Paradigms”, Google researchers present a novel language pre-training paradigm called Unified Language Learner (UL2) that improves the performance of language models universally across datasets and setups. UL2 frames different objective functions for training language models as denoising tasks, where the model has to recover missing sub-sequences of a given input. During pre-training it uses a novel mixture-of-denoisers that samples from a varied set of such objectives, each with different configurations. The authors demonstrate that models trained using the UL2 framework perform well in a variety of language domains, including prompt-based few-shot learning and models fine-tuned for downstream tasks. Additionally, they show that UL2 excels in generation, language understanding, retrieval, long-text understanding and question answering tasks.
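A denoising task of the kind described here, where the model has to recover missing sub-sequences, can be sketched in a few lines. This shows one span-corruption configuration with hand-picked spans; the actual mixture-of-denoisers samples span positions, lengths and corruption rates, which this sketch does not attempt. The `corrupt_spans` helper is hypothetical, and the `<extra_id_N>` sentinel names follow the convention used by the T5 tokenizer.

```python
def corrupt_spans(tokens, spans):
    """Build a (model input, training target) pair for a denoising task.

    `spans` is a sorted, non-overlapping list of (start, length) pairs.
    Each span is replaced by a sentinel in the input; the target lists
    every sentinel followed by the tokens it hides.
    """
    inputs, targets = [], []
    cursor = 0
    for sentinel_id, (start, length) in enumerate(spans):
        sentinel = f"<extra_id_{sentinel_id}>"
        inputs.extend(tokens[cursor:start])      # keep the visible tokens
        inputs.append(sentinel)                  # mark the hidden span
        targets.append(sentinel)
        targets.extend(tokens[start:start + length])
        cursor = start + length
    inputs.extend(tokens[cursor:])
    targets.append(f"<extra_id_{len(spans)}>")   # closing sentinel
    return inputs, targets


sentence = "Thank you for inviting me to your party last week".split()
model_input, model_target = corrupt_spans(sentence, [(2, 2), (8, 1)])
print(" ".join(model_input))
print(" ".join(model_target))
```

The encoder sees the corrupted input, and the decoder is trained to produce the target, so the same text-to-text interface serves both pre-training and downstream tasks.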

Retrieval Augmented Generation (RAG):

Foundation models are usually trained offline, making the model agnostic to any data that is created after the model was trained. Additionally, foundation models are trained on very general domain corpora, making them less effective for domain-specific tasks. You can use Retrieval Augmented Generation (RAG) to retrieve data from outside a foundation model and augment your prompts by adding the relevant retrieved data in context. For more information about RAG model architectures, see Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks.

With RAG, the external data used to augment your prompts can come from multiple data sources, such as document repositories, databases, or APIs. The first step is to convert your documents and any user queries into a compatible format so that a relevancy search can be performed. To make the formats compatible, a document collection, or knowledge library, and user-submitted queries are converted to numerical representations using embedding language models. Embedding is the process by which text is given a numerical representation in a vector space. RAG model architectures compare the embeddings of user queries with the vectors of the knowledge library. The original user prompt is then appended with relevant context from similar documents within the knowledge library. This augmented prompt is then sent to the foundation model. You can update knowledge libraries and their relevant embeddings asynchronously.
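The relevancy search and prompt augmentation steps can be sketched as follows. This is a toy illustration, not a production setup: `embed` uses simple word counts as a stand-in for a real embedding language model, the knowledge library is a made-up list of three sentences, and the prompt template is an assumption.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: a word-count vector standing in for an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query, library, k=1):
    """Return the k documents whose embeddings are closest to the query's."""
    query_vec = embed(query)
    ranked = sorted(library, key=lambda doc: cosine(query_vec, embed(doc)),
                    reverse=True)
    return ranked[:k]


def augment(query, library, k=1):
    """Append the retrieved context to the original user prompt."""
    context = "\n".join(retrieve(query, library, k))
    return (f"Answer using the context.\n\nContext:\n{context}\n\n"
            f"Question: {query}\nAnswer:")


knowledge_library = [
    "Vela nodes each have eight A100 GPUs.",
    "Prompt-tuning keeps the model weights frozen.",
    "Data cards record the filters applied to a pile.",
]
question = "How many GPUs does each Vela node have?"
augmented_prompt = augment(question, knowledge_library)
```

The string in `augmented_prompt` is what would be sent to the foundation model. In a real system the document embeddings would be computed once and stored, rather than recomputed for every query as this sketch does.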

Prerequisites: data sets

1) Training data set (contains questions and answers)

2) Test data set

Pipeline:

1) Generate embeddings for the training data.

2) Store the embeddings in a vector database.

3) Take a user question and convert it into an embedding.

4) Send the question embedding to the vector database and get back the matching answer.

5) Create a prompt from the question and the retrieved answer.

6) Send the prompt to the foundation model Flan-UL2 (an encoder-decoder model) and get the final answer.